09/02/26

I was speaking to a few friends in the fashion industry recently and it sent me down a bit of a rabbit hole. Not because I’m anti-AI. I use AI every day as a freelance 3D technical designer. It’s genuinely useful, and in the right places it removes friction.

But I keep coming back to the same uncomfortable question.

Are we choosing speed over quality yet again?

Fashion has a habit of doing that. We optimise for pace, we chase output, we trim timelines until there’s nothing left to trim. And when the industry finally started taking 3D seriously, it felt like a rare moment where the conversation shifted. Better fit. Fewer physical samples. Clearer communication. More confidence before you cut cloth.

Then AI image generation arrived and the message became: “I can do that… but faster.”

The issue is not that AI can’t produce beautiful visuals. It can. The issue is that visuals are not the same thing as a product.

Let’s say this clearly, because it gets muddled in a lot of conversations.

AI image generation is brilliant for creating marketing-style imagery quickly. Retailers are already using it for speed and cost, and that is not speculation. Reuters reported Zalando cutting image production time from weeks to days and reducing costs by 90%. Reuters also reported Zara adopting AI to generate imagery using real models, with the company stating that it was intended to complement existing processes.

That is the “fast” use case. It is real, it is happening, and it makes sense for certain teams.

But a faster image is not automatically a better decision.

A marketing image cannot tell you:

And this is where I worry we are about to repeat the same old pattern: we get dazzled by speed, then we deal with the consequences later, usually in sampling, QC, returns, and customer disappointment.

AI can generate a convincing garment visual, but that does not mean the garment exists in a production sense.

Where is your production-ready pattern?

Where is your grade?

Where is your spec logic?

Where is your proof that it fits a body, not a prompt?

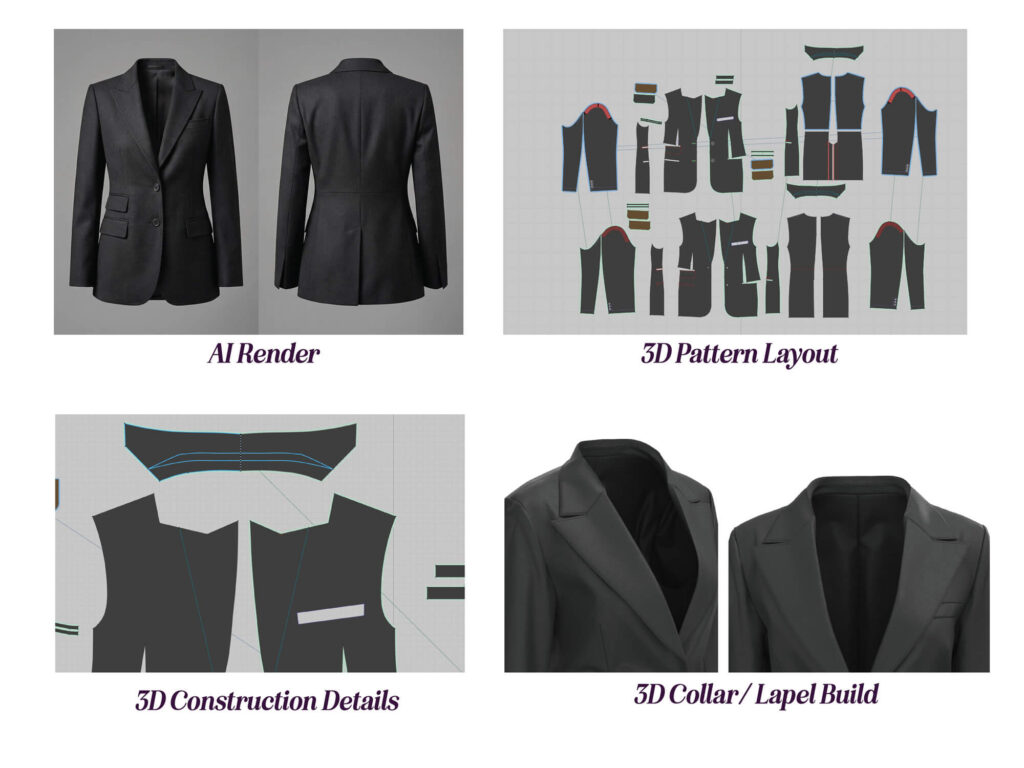

The AI render (top left) creates instant confidence. The silhouette looks resolved, the fabric reads as premium, and from a marketing point of view it does its job. But the pattern layout (top right) is where the garment becomes real, because it shows there is an actual build behind the image, not just a visual impression.

The close-ups (bottom row) are the part most people skip, but they are where “production-ready” lives. Collar and lapel geometry, roll line behaviour, seam placement, and edge finish decisions are what determine whether this blazer can be manufactured consistently and fit as intended. In short: the render can persuade, but the pattern and construction details are the proof.

If anything, AI visuals can create a dangerous confidence gap. A team sees something that looks finished, so the design feels “resolved”. But later, when you try to engineer it into reality, you discover that half the details were never properly vetted.

This is exactly why I still see 3D as so valuable, when it’s used properly.

3D is not just for making something look nice. It is a decision-making environment.

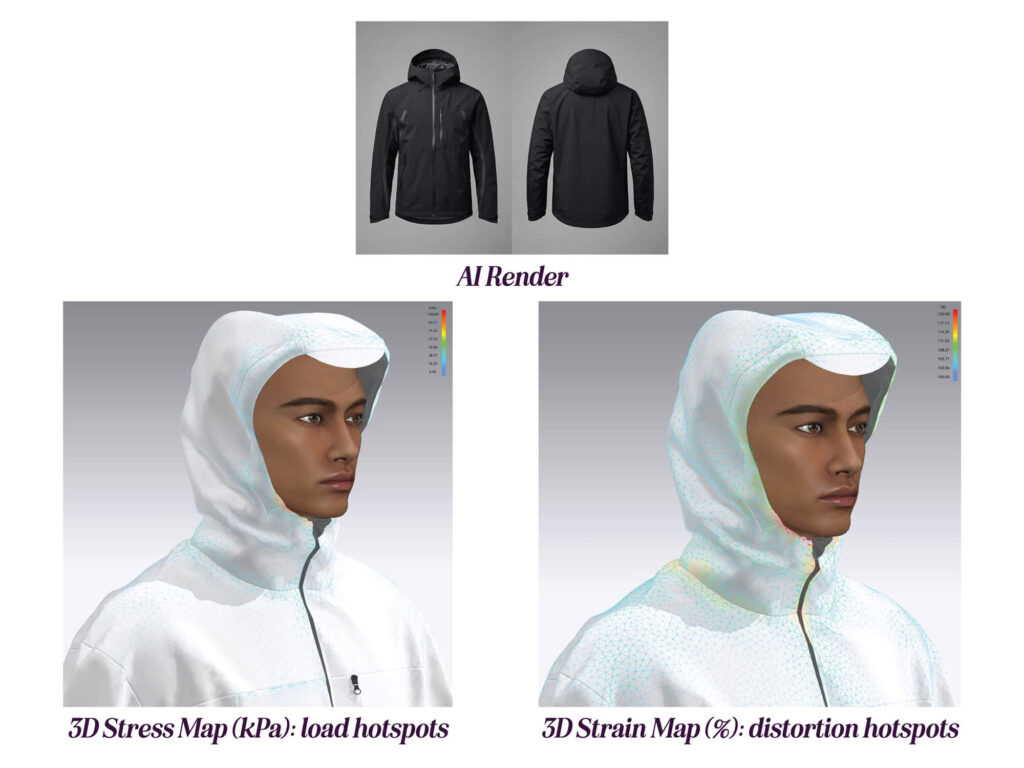

A render can show style, but fit maps show behaviour. Here, strain (%) highlights where the material is distorting most, and the hotspot sits at the lower hood opening near the chin/jaw. Stress (kPa) highlights where force is concentrating, and it clusters around the hood opening and neckline. When both maps agree on the same zone, it’s a strong signal that the pattern shape, balance, or allowances in that area need a second look before moving forward.
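For anyone who likes to see the logic written down, here is a minimal Python sketch of that "both maps agree" check. Everything in it is an assumption for illustration: the zone names, the readings, and the two limits are invented, and in practice they would come from your own fit-map export and your fabric's tolerances. It does not use any 3D software's API.

```python
from dataclasses import dataclass


@dataclass
class ZoneReading:
    zone: str
    strain_pct: float  # peak strain in the zone, in percent
    stress_kpa: float  # peak stress in the zone, in kPa


def zones_to_review(readings, strain_limit_pct, stress_limit_kpa):
    """Return the zones where BOTH strain and stress exceed their limits."""
    return [
        r.zone
        for r in readings
        if r.strain_pct > strain_limit_pct and r.stress_kpa > stress_limit_kpa
    ]


# Hypothetical readings, not measured from the garment pictured above.
readings = [
    ZoneReading("hood opening (chin/jaw)", strain_pct=18.0, stress_kpa=42.0),
    ZoneReading("neckline", strain_pct=9.0, stress_kpa=38.0),
    ZoneReading("sleeve", strain_pct=4.0, stress_kpa=6.0),
]

# Hypothetical limits; real ones depend on the fabric and the fit intent.
flagged = zones_to_review(readings, strain_limit_pct=12.0, stress_limit_kpa=30.0)
print(flagged)  # ['hood opening (chin/jaw)']
```

With these made-up numbers, only the hood opening is flagged: the neckline is over the stress limit but under the strain limit, so it would be a "keep an eye on it" zone rather than a "stop and fix the pattern" zone.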

The Interline described 3D virtual sampling as a communication tool: a platform teams can use to exchange comments and ideas with business partners, and one that can reduce lead time and sampling when it is embedded in the workflow. That framing matters, because it puts 3D where it belongs: not as “pretty pictures”, but as an engine for alignment.

If your only goal is to generate nice visuals for a concept deck, AI might genuinely be the more efficient route.

But if that is your main relationship with 3D, I’ll be honest, I think you are missing the point.

The real value of 3D is what happens before the final image:

The Interline has been vocal about the wider digital transformation challenge in fashion and the pressure on brands to maximise the value of digital talent, tools and assets. That is the heart of this conversation for me. If we treat 3D as an image-making tool, and then replace it with AI because AI is faster, we are not transforming anything. We are just swapping one output method for another, without building a more robust product process.

Another part of this discussion that gets glossed over is the effort.

Yes, AI can be quick. But getting a specific, consistent, on-brand, technically plausible outcome can take a lot of refinement. Prompting, iteration, image editing, re-rolling details, correcting hands, correcting seams, correcting proportions, correcting styling logic.

Even when the image is stunning, you are still left with the production questions.

And if you still need to create patterns, sample, fit, and QC in the usual way, then what have you actually reduced?

You might have sped up the “look” phase. But you have not removed the risk.

This is the part I care about most.

My worry with AI image generation is not just “will the garment work in production”. It’s what the tool encourages culturally.

It can push teams toward:

And we need to be honest about what that leads to. More noise, not more value.

If we want to talk about sustainability and quality, we have to talk about decision quality. Not just material choice. Not just marketing language. Decision quality is what determines whether a product earns its place in someone’s wardrobe.

Do customers want ten versions of a style that was never properly vetted?

Or do they want one product that fits well, feels considered, and lasts?

I know which camp I’m in.

I’m not advocating for ignoring AI. I’m advocating for using it where it strengthens the pipeline, rather than as a shortcut that replaces it.

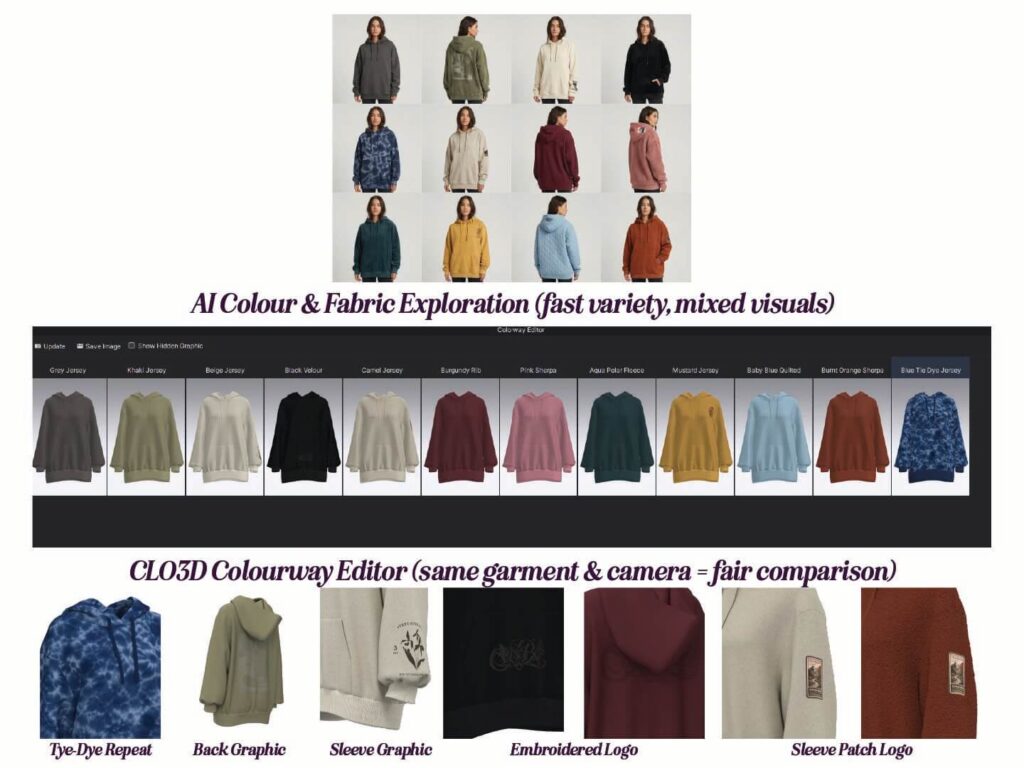

Colourways are where teams can unintentionally drift back into speed-first decision-making. AI can generate variety fast. 3D helps you keep control. You’re not just switching colours. You’re validating fabric behaviour, trim visibility, and branding placement on the same garment, under the same view, so decisions are based on evidence rather than aesthetic momentum.

Here are the AI use cases I feel good about:

The bigger industry conversation is heading that way too. Business of Fashion has highlighted both the rapid prioritisation of generative AI and the fact that many companies are still early in applying it directly to design and product development. More recently, BoF also pointed to AI reducing costs and reshaping routine work, with examples like Zalando using generative AI across functions and reporting major cost reductions.

That is the reality. AI is not going away.

So the question becomes: can we adopt AI without losing the discipline that 3D was starting to bring back into product development?

Here is my bottom line.

If AI helps you communicate faster, great.

If AI helps you remove repetitive admin, even better.

If AI helps you test and learn without wasting physical resources, brilliant.

But if AI is being used to skip the hard parts, the production parts, the truth parts, then we are heading for trouble.

Because fashion doesn’t need more output. It needs better decisions.

And for fit, construction, grading, and production readiness, 3D is still one of the strongest decision environments we have, when it’s used as more than a rendering tool.