20/02/26

I attended the CLO x Epic Games event in London this week, and it was one of those evenings that leaves you thinking about workflow, not just tools.

If you’ve read my recent blog on AI vs 3D, you’ll know where I stand. I am not against AI at all. I just care about what you can prove, not only what you can show.

Here are the highlights that stuck with me, plus why I think they matter for brands, educators, and anyone trying to build real capability in digital fashion.

Brian King from CLO3D spoke about the AI and 3D comparison in a way that felt very aligned with what I have been writing about recently.

One demo used “Nano Banana” to produce a stunning AI garment visual. It looked incredible. But the key point was simple:

It’s still only an image.

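An AI image might look convincing, but it cannot tell you how the garment fits, whether the pattern is accurate, whether it can actually be constructed, or how it will grade.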

The analogy that landed for me was this: it’s like presenting a finished meal without the ingredients. You can admire it, but you have no substance behind it.

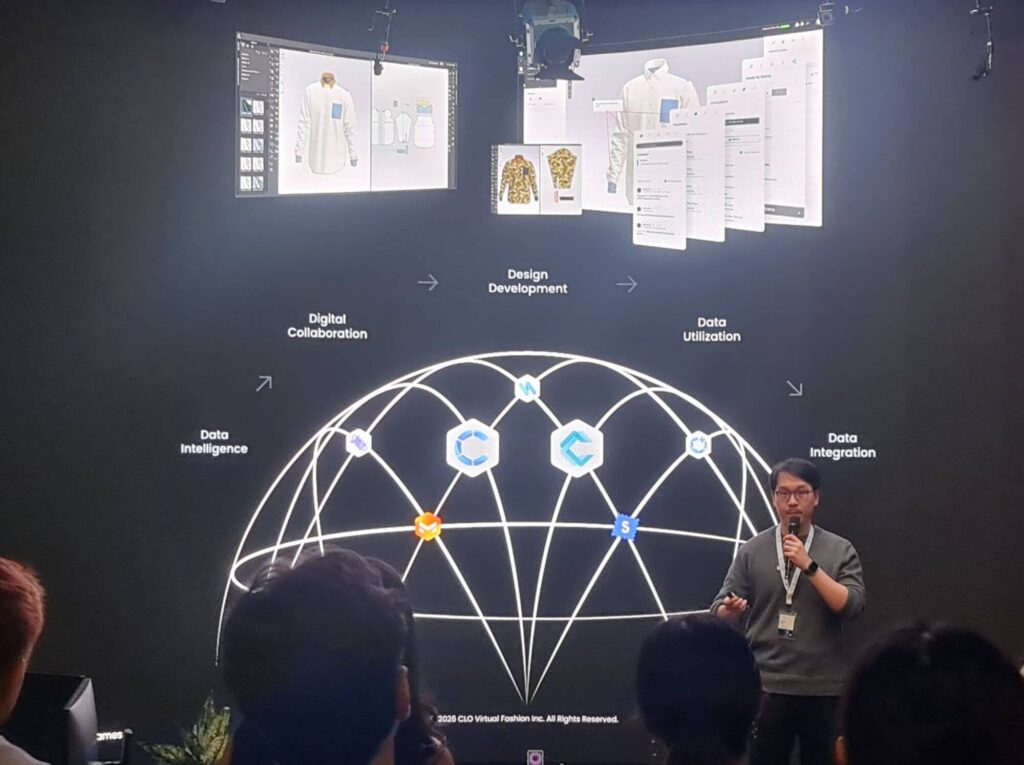

This is exactly where CLO fills the gap. It connects visuals to something you can measure, test, and refine.

The CLO team also shared updates on Unreal Engine and demonstrated how smoothly the CLO plug-in workflow is becoming.

The tool that really caught my attention was Parametric Cloth.

The promise here is huge: the ability to resize a garment to fit any MetaHuman. In other words, you are not rebuilding everything from scratch each time you change the body.

I am looking forward to testing this properly because if it performs well, it could support:

This is the kind of development I like to see. Practical improvements that reduce friction, not just new features that look impressive in a demo.

The panel featured Julien Blockschmidt (3D Artist), Yao Yao (Vivienne Westwood), and Kathy McGee (Central Saint Martins), moderated by Costas Kazantzis from Fashion Innovation Academy.

It was genuinely informative, especially because it included both industry and education perspectives.

Kathy spoke about embedding digital fashion into courses, with students working with CLO and Unreal as part of a five-week project. That is a strong step forward.

But it was also mentioned that these are not digital-only projects, so students get little time to build fluency in the software.

That is a shame, because confidence in 3D comes from time in the tools. That said, I do believe it’s important that students still learn manual processes too. The best outcomes come from understanding both, not replacing one with the other.

Yao Yao shared how Vivienne Westwood are using 3D for design visualisation, checking complex fit, and testing fabrics and colours.

This is exactly the point of 3D in my opinion. It’s not about “making it look nice”. It’s about making decisions earlier and with more clarity.

One of the most thought-provoking parts of the panel was the discussion around how students are becoming more interested in developing their own tools and systems.

That leads naturally into hybrid workflows, including AR and VR. The idea is to step away from the computer screen sometimes and get back to being more hands-on in how we review and collaborate.

I like this concept a lot, as long as it genuinely improves decision-making.

One of the best parts of any event is always the people.

It was great to speak to fellow educators and hear what is working in their courses. London College of Fashion came up in conversation, and I loved hearing about their approach of running a digital and manual project simultaneously. That kind of structure makes digital learning feel purposeful, not like an add-on.

I also enjoyed catching up with 3D creators and meeting new ones. Hearing what people are building, and how they are applying these tools in the real world, is always the best reality check.

One of my current interests is exploring how VR and AR could support 3D fashion development.

I have been a bit apprehensive, mainly because I often find AR and VR a lengthier process than simply developing inside 3D software.

But I can absolutely see where this could become valuable, especially later in the development process when you are:

If VR and AR can support clearer approvals and reduce misunderstandings, I think they will earn their place.

AI garment visual

A generated image that can look realistic, but does not contain the data needed for fit, pattern accuracy, construction feasibility, or grading.

3D garment simulation (CLO)

A digital garment built from pattern pieces with measurable properties and construction logic. This is where you can test fit, proportion, balance, seam placement, and iterate with evidence.

Unreal Engine

A real-time 3D engine (often used for games, film, and interactive experiences). In fashion, it’s used for high-end real-time visualisation and digital experiences.

CLO plug-in (CLO to Unreal workflow)

A workflow connection that supports moving CLO garments into Unreal more efficiently, helping teams iterate without constant manual exporting and rebuilding.

MetaHuman

Epic Games’ high-quality digital human characters used in Unreal. Think of these as advanced digital “models” that can support realistic visualisation.

Parametric Cloth

A tool/workflow concept that allows a garment to be resized or adapted to different bodies (such as different MetaHumans) without rebuilding the garment from scratch each time.

AR (Augmented Reality)

Digital content overlaid onto the real world, usually viewed through a phone, tablet, or headset.

VR (Virtual Reality)

A fully immersive digital environment viewed through a headset. For fashion, it can support spatial review and collaborative decision-making, if implemented with purpose.

This event reinforced something I keep coming back to.

If you are using 3D only for visuals, you are missing the point.

The real value is evidence. Fit. Construction. Feasibility. Clearer decisions upstream.

If you’re exploring CLO, Unreal, or real-time workflows and want to sanity-check where to start (and what is actually worth your time), feel free to reach out. I’m always happy to talk workflow, training strategy, and practical implementation.